In today's app-store-driven business environment, many traditional hardware companies find themselves in a position where their hardware products, which were once the essence of the business, are now merely a vehicle to carry software products and services.

Apple changed the world of mobile phones with the iPhone. This once hardware-focused product category suddenly gained customization through an aftermarket app store, and new features and functionality were delivered over the air. Over the past decade, Tesla has pushed a similar paradigm shift into the automotive world. In its 2030 Strategy, Volkswagen Group, which many regard as a champion of platforms, clearly indicates that the unified software platform is just as important as the unified mechatronics platform.

In fact, many ex-hardware business executives witness that their hardware business is turning into a software business. Once relying on world-class mechanical engineers, companies nowadays have more coders than CADers.

Since so much of the customer value, customization, and performance is now software-driven, more focus is put on the software architecture, and rightly so. Companies carrying a legacy of multiple software monoliths, each developed to support a specific product platform, face a complex challenge: how to transition from the existing state to one where a single, unified, efficient software architecture maximizes performance and customer value while minimizing development work and time?

This blog will share fundamental best practices for software architecture, especially in products that combine hardware and electronics with software. But first, let's look at some significant challenges that hardware companies face today related to software.

Typical Symptoms of Poor Software Architecture in Hardware Companies

This chapter will describe some typical pain points in companies where the software architecture has been developed organically over time without a clear strategy.

Speed

Since there is no strategy for disconnecting different software parts, all software is delivered "all at once" in a monolithic fashion. All features need to line up to be released. Any delay in a feature deemed critical to the release delays the entire release. Development and innovation speed is low since all the code needs to be re-tested and verified for every release, even if it wasn't changed. Releases are enormous, and the test effort is equally significant.

You can think of this as a line of cars at a traffic incident. All lanes are squeezed into one to pass the incident site, creating a massive queue of cars with the added risk of causing more accidents.

Figure 1. Features line up and wait for a release

The speed problem worsens if multiple software platforms implement the same features for different hardware platforms. The test and verification effort must then be repeated for each combined software/hardware platform, which further delays a feature from rolling out to all the applicable software platforms. If hardware differences force slight differences in the implementation on each software platform, the same feature ends up being re-implemented repeatedly. In the worst case, some software features released on the latest hardware will never reach the older hardware: the development and test effort for legacy hardware cannot be justified, and existing customers are left behind.

Figure 2. Multiple software platforms implementing similar functionality

Cost

Another problem with monolithic releases is that it can be tough to predict what an individual feature will cost to develop, because feature development relies so much on a larger integration and test effort for the entire system. Feature costs tend to be underestimated since much of the cost is covered by another project that takes care of the complete integration, i.e., a "release project." The transparency of the "release project" is low, and management often believes that they are throwing money into a black hole without any idea of what they get for the money.

The costs related to finding and fixing bugs are also hard to calculate due to the same reason. There is no good way to calculate the hidden costs associated with the complexity growth in the code since the dependencies in the software system aren't clear.

Quality

The effort to ensure that the products have sufficient quality is typically very large and time-consuming in monolithically released products. It is not unusual to see test and verification work take anywhere from one to several months, with huge system test efforts requiring lots of physical hardware to test on. This issue is partially caused by a lack of understanding of what parts of the system a change will affect. In addition, the solution space is so large that it is impossible to test all aspects of the system. Therefore, everything that is known to have gone wrong after a previous change is re-tested.

Since the speed of doing big releases is slow, it is tempting to do "small" releases for some features and bug fixes. In this case, only selected tests are run to save time. These small releases can often backfire since the side effects of the changes are not well known. A small bug fix in one place can easily create a ripple effect somewhere unexpected. For instance, new features might consume memory or CPU resources that some unrelated sub-system relied on.

Not scalable

It is not unusual to see scalability problems in software systems that have grown organically over time. New hardware capabilities, such as more memory or a larger number of computing cores, cannot be exploited efficiently without a considerable development effort.

Also, scaling the system to a smaller footprint can be a problem. Since the hardware requirements of the code are not well documented, it requires an extensive effort to squeeze the software into a smaller hardware footprint. Business-critical features might have to be taken out to achieve the necessary software footprint, creating an uncompetitive product on the market.

Not resilient / High coupling towards hardware

Many times, the software has a high coupling towards the hardware, making it sensitive to hardware changes. This dependency became very evident during the global microchip shortage that started with the Coronavirus pandemic in 2020, with ripple effects still being felt.

Many companies have kept pushing old hardware designs to the market with chip designs that are 15 years old. Those companies are now forced to introduce modern chips instead since no suppliers want to create the 15-year-old chip designs. They are faced with complete software rewrites due to many "bare-metal" hardware designs (implementations without operating systems).

Six Best Practices for Software Architecture in Hardware Companies

Software modularity is a measurement of how well the software is decomposed into smaller pieces with standardized interfaces. The idea is to create software products by combining reusable chunks of code, so that you only implement, test, and deploy a feature or functionality once and then maximize reuse across the hardware product portfolio.

Most professional software is modular in one way or another already. Modular programming was, in fact, created in the 1960s. However, many companies fail to exploit the full potential of modular software architecture and, as described above, release the software in monolithic distributions. This chapter will share some best practices for modular software architectures.

1. Modular rather than Monolithic Software Architecture

If the monolithic software can be split up into modules, it can be developed, tested, integrated, and released faster. Features can be released when they are ready, without affecting each other, and bugs can be fixed without risking side effects that ripple through the system.

In the earlier car accident example, modularity means adding more lanes to the road, allowing cars to pass when there is an accident in one of the lanes.

Figure 3. Modular software can be released in parallel

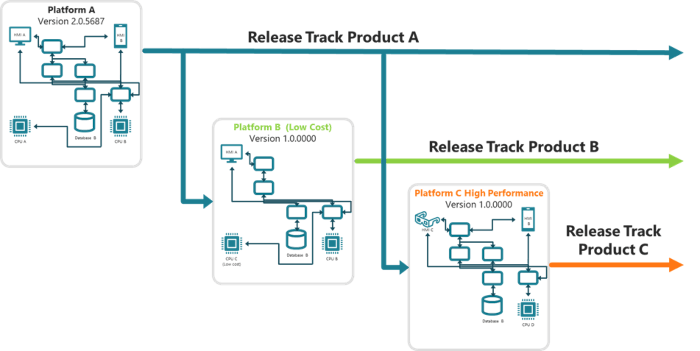

Instead of having one software platform per hardware platform, you can create one modular platform that supports multiple hardware products and maximizes reuse. Each module can have its own release cycle, allowing for slow releases of mission-critical features, such as car drive-by-wire features, and fast releases for User Interface improvements.

Figure 4. Modular release tracks with maximized reuse
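Separate release cycles only work if each module can verify that the interfaces it relies on are still available. The sketch below shows one possible way to express that check. It assumes a semantic-versioning-style convention (same major version, at least the required minor version), and the names `ModuleRelease`, `can_release`, `drive_control`, and `user_interface` are hypothetical illustrations rather than part of any specific platform.

```python
from dataclasses import dataclass, field


@dataclass
class ModuleRelease:
    """One released version of a module (hypothetical structure)."""
    name: str
    api_version: tuple  # (major, minor) of the interface this module provides
    # Interfaces this module relies on: module name -> (major, minimum minor)
    requires: dict = field(default_factory=dict)


def can_release(candidate: ModuleRelease, installed: dict) -> bool:
    """Return True if every interface the candidate needs is already provided.

    Assumed rule: the major version must match exactly and the provider's
    minor version must be at least the required minor version.
    """
    for dep_name, (major, min_minor) in candidate.requires.items():
        provider = installed.get(dep_name)
        if provider is None:
            return False
        have_major, have_minor = provider.api_version
        if have_major != major or have_minor < min_minor:
            return False
    return True


# A slow-moving, mission-critical module that is already deployed.
installed = {"drive_control": ModuleRelease("drive_control", (2, 3))}

# A fast-moving UI release that only needs drive_control API 2.1 or later.
ui_ok = ModuleRelease("user_interface", (5, 0), {"drive_control": (2, 1)})

# A UI release that needs a drive_control API that has not shipped yet.
ui_blocked = ModuleRelease("user_interface", (6, 0), {"drive_control": (3, 0)})
```

With a check like this, the user interface can ship on its own track as long as `can_release` holds, while a release that needs a newer drive-by-wire interface waits for that module's slower cycle.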

2. Strategic Software Modules and APIs

Many companies have software that is modular in one way or the other already due to:

- Object-Oriented Design implementations

- Classes, Macros, and templates

- Components and sub-systems - often based on hierarchical structures in the company, i.e., according to Conway's law

- Programming Language differences - C++ and HTML don't blend well

- Operating System differences - code written for FreeRTOS and code written for iOS don't mix well

- Hardware dependencies – computation is split across several physical hardware nodes

With that said, what is the optimal way to divide code into modules? One approach is to think of the strategy of the module and its interfaces.

There can be software that doesn't need to be changed often and whose APIs are used widely throughout the system. Changing that code might be expensive. Since it usually isn't customer-facing, customers might be less willing to pay for advancements here, and there is no need for variance. This code can be clustered into "Operational Excellence" modules. It is a backbone service that doesn't need to vary in the system and can be reused.

There can also be software that is necessary to be relevant on the market, to comply with customer needs and expectations. Customer needs vary depending on region, country, and type of industry. Here there might be a need to support a lot of variance so that you are relevant in many markets. This type of code can be clustered into "Customer Intimacy" modules.

Lastly, there is software that defines you as a company, or that sits in an area where a huge development push is happening throughout the industry. This technology may need frequent updates to stay relevant on the market. This code is also something the customer might be willing to pay extra for. It can be clustered into "Product Leadership" modules.

Figure 5. Apply a strategy to your modules
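The three strategies can be recorded next to each module so that release planning and variant decisions follow from them. The sketch below is one simplified reading of the text above: it reduces the decision to two yes/no questions, and the precedence between them is an assumption. The function `classify` and the module names are hypothetical.

```python
from enum import Enum


class Strategy(Enum):
    OPERATIONAL_EXCELLENCE = "Operational Excellence"  # stable, reused, no variance
    CUSTOMER_INTIMACY = "Customer Intimacy"            # many variants per market
    PRODUCT_LEADERSHIP = "Product Leadership"          # frequent updates, differentiating


def classify(needs_market_variants: bool, needs_frequent_updates: bool) -> Strategy:
    """Map two simplified questions onto a module strategy.

    Assumption: frequent technology updates take precedence over variance
    when both apply. A real classification would weigh more drivers.
    """
    if needs_frequent_updates:
        return Strategy.PRODUCT_LEADERSHIP
    if needs_market_variants:
        return Strategy.CUSTOMER_INTIMACY
    return Strategy.OPERATIONAL_EXCELLENCE


# Hypothetical modules and the strategy each one would receive.
module_strategies = {
    "bootloader": classify(needs_market_variants=False, needs_frequent_updates=False),
    "regional_settings": classify(needs_market_variants=True, needs_frequent_updates=False),
    "path_planning": classify(needs_market_variants=False, needs_frequent_updates=True),
}
```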

3. Software Portability

When software and hardware are combined, software portability might be a key target of modularity. Software is portable when it is independent of the hardware it runs on. This independence can be achieved with carefully designed interfaces that abstract the hardware level from the application level. If done right, you can control which parts of the software can be carried over to a new hardware platform and which parts need to be adapted.
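As a minimal sketch of such an interface, the example below puts a temperature sensor behind an abstract class so that the application logic never sees raw hardware values. The boards, their raw units, and all class and function names are hypothetical and chosen only for illustration.

```python
from abc import ABC, abstractmethod


class TemperatureSensor(ABC):
    """Hardware-facing interface; the application depends only on this."""

    @abstractmethod
    def read_celsius(self) -> float:
        ...


class BoardASensor(TemperatureSensor):
    """Hypothetical adapter for a board that reports millidegrees Celsius."""

    def __init__(self, raw_millidegrees: int) -> None:
        self._raw = raw_millidegrees  # stands in for a register read

    def read_celsius(self) -> float:
        return self._raw / 1000.0


class BoardBSensor(TemperatureSensor):
    """Hypothetical adapter for a board that reports tenths of a kelvin."""

    def __init__(self, raw_decikelvin: int) -> None:
        self._raw = raw_decikelvin  # stands in for a register read

    def read_celsius(self) -> float:
        return self._raw / 10.0 - 273.15


def fan_should_run(sensor: TemperatureSensor, limit_celsius: float = 60.0) -> bool:
    """Application logic: portable because it never touches raw hardware values."""
    return sensor.read_celsius() > limit_celsius
```

Moving to a new board then means writing one new adapter class, while `fan_should_run` and everything built on it carries over unchanged.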

4. Automated Testing

For software that is changed often, testing is typically a significant concern. Automated testing enables you to test faster and spot issues earlier, so less time and fewer resources are spent integrating the changes. Automated testing is easier to achieve with smart software modularity. By isolating changing parts behind robust interfaces, we can test smaller chunks, which makes both running the tests and finding bugs faster.
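The sketch below illustrates the idea with Python's standard `unittest` module: a small control module is tested against a fake heater instead of real hardware, so the test runs in milliseconds on any build server. `Thermostat`, `Heater`, and `FakeHeater` are hypothetical names used only for this example.

```python
import unittest
from typing import Protocol


class Heater(Protocol):
    """Interface the control logic depends on."""

    def set_on(self, on: bool) -> None: ...


class FakeHeater:
    """Test double that records commands instead of driving real hardware."""

    def __init__(self) -> None:
        self.states = []

    def set_on(self, on: bool) -> None:
        self.states.append(on)


class Thermostat:
    """Module under test: turns the heater on below the target temperature."""

    def __init__(self, heater: Heater, target_celsius: float) -> None:
        self._heater = heater
        self._target = target_celsius

    def update(self, measured_celsius: float) -> None:
        self._heater.set_on(measured_celsius < self._target)


class ThermostatTest(unittest.TestCase):
    def test_heats_when_too_cold(self):
        heater = FakeHeater()
        Thermostat(heater, target_celsius=21.0).update(18.5)
        self.assertEqual(heater.states, [True])

    def test_stops_heating_at_target(self):
        heater = FakeHeater()
        Thermostat(heater, target_celsius=21.0).update(21.0)
        self.assertEqual(heater.states, [False])


def run_tests() -> unittest.TestResult:
    """Run the suite programmatically, as a CI job might."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ThermostatTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

Because the module only knows the `Heater` interface, the same tests keep working when the physical heater or its driver is replaced.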

5. Over-The-Air (OTA) Update Capability

For software where updates and features are rolled out to an installed base, Over-the-Air (OTA) update capability is a game changer. To push software updates in a controlled and efficient manner, it is key to separate modules meant to be updated often from critical modules where the risk of change is much higher. Updating small chunks of the software is also faster and less resource-consuming.

Adding OTA update capability to your product opens the door to increased software quality and an aftermarket business model. It is also a fast and efficient way to combat cyber security vulnerabilities.
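A minimal sketch of how an update planner could keep frequently updated modules apart from critical ones is shown below. It assumes dotted numeric version strings and a per-module `safety_critical` flag. `Module`, `plan_update`, and the module names are hypothetical, and a real OTA system would also need signing, rollback, and staged rollout.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Module:
    name: str
    installed: str         # version currently on the device
    available: str         # newest version on the update server
    safety_critical: bool  # assumed flag: critical modules need a stricter process


def parse(version: str) -> tuple:
    """Turn '2.10.0' into (2, 10, 0) so versions compare numerically."""
    return tuple(int(part) for part in version.split("."))


def plan_update(modules, allow_critical: bool = False) -> list:
    """Return the names of the modules to include in this OTA round."""
    return [
        m.name
        for m in modules
        if parse(m.available) > parse(m.installed)
        and (allow_critical or not m.safety_critical)
    ]


device = [
    Module("user_interface", "2.9.0", "2.10.0", safety_critical=False),
    Module("connectivity", "1.4.1", "1.4.1", safety_critical=False),
    Module("motion_control", "3.0.0", "3.0.1", safety_critical=True),
]
```

Here a routine round, `plan_update(device)`, only touches the user interface. The motion control update is included only when the caller explicitly passes `allow_critical=True`, for example after extended verification.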

6. Refactoring rather than Rewriting

There are good reasons for companies to invest in new and improved technologies in software development. However, this should not come at the cost of throwing out fully functional code that can be refactored for reuse in new products as well. After all, code doesn't age, but the world around it changes. Instead of rewriting code that is working today, try to figure out what is required from it tomorrow and create the interfaces needed to enable strategic reuse.
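As a small illustration of refactoring for reuse, the sketch below starts from a working function with product-specific constants baked in and turns those constants into parameters whose defaults preserve the old behavior. The functions and numbers are hypothetical.

```python
import math


def legacy_calc_speed(ticks, ms):
    """Existing, working code: hard-wired to a 0.5 m wheel and 100 ticks/rev."""
    return (ticks / 100) * (math.pi * 0.5) / (ms / 1000)


def wheel_speed(
    ticks: int,
    elapsed_ms: float,
    *,
    wheel_diameter_m: float = 0.5,
    ticks_per_rev: int = 100,
) -> float:
    """Refactored version: same calculation, hardware assumptions now explicit.

    The defaults reproduce the legacy behavior, so existing products are
    unaffected, while new products can pass their own wheel parameters.
    """
    revolutions = ticks / ticks_per_rev
    distance_m = revolutions * math.pi * wheel_diameter_m
    return distance_m / (elapsed_ms / 1000)


# The existing product gets the same result from both functions.
same_as_before = math.isclose(wheel_speed(200, 1000), legacy_calc_speed(200, 1000))

# A new product with a smaller wheel and a different encoder reuses the code.
new_product_speed = wheel_speed(50, 500, wheel_diameter_m=0.2, ticks_per_rev=50)
```

Comparing the refactored function against the legacy one, as `same_as_before` does, is a cheap way to confirm that behavior was preserved before the old function is retired.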

Three Great Examples of Software Architecture in Hardware Companies

Tesla

Probably the most well-known "software-defined architecture" is within the automotive industry. Features are continuously being deployed using OTA to the existing fleet, making the cars smarter and easier to use on a weekly basis. A powerful centralized computer contains most features and is over-specified for future demands, such as full self-driving. At Tesla, if a safety problem occurs, they can apply feature toggles to disable the affected feature remotely, avoiding large-scale recalls.

What might not be as well known is Tesla's ability to monetize the data generated by its cars. Tesla generates close to $1200 per vehicle every year by selling software upgrades and digital services to its customers. A lot of this can be done through data that is collected while the car is being used and then uploaded when the car has a Wi-Fi connection. The collected data allowed Tesla to improve the Model 3 braking distance (90-0 km/h) by 5 meters through improved calibration of the anti-lock brake system, which was deployed OTA to all cars. Tesla also offers a lower insurance premium to car owners who drive safely, as determined from data on how the vehicle is being driven.

While there is a direct cost of increased hardware capabilities in computing, Tesla effectively extends its products' life and competitiveness and provides services that cover the extra costs, gaining an increased life-cycle value and happy customers.

iRobot OS

iRobot defined how a robot vacuum should look with its iconic round design. In fact, they did that so well that many people refer to any robot vacuum as a Roomba even when it didn't come from iRobot. While the latest versions might not clean much better than the previous models (they are already great at that), they grow more intelligent in how they navigate your home every time they are used, through the iRobot Genius software. The robots can already spot and avoid pet feces or phone charger cables, and more objects are added continuously. One example of this could be seen last Christmas, when the robots could detect the Christmas tree and suggest a cleaning zone underneath it to keep the pine or spruce needles from piling up. The robots also suggest more frequent cleaning when dogs shed their winter fur or when the pollen season arrives. They also integrate with all major digital assistants to enable voice commands. All these enhancements are continuously deployed OTA while the robot is charging in its dock.

While there is stiff competition in robot vacuum cleaners, iRobot is competing with robust software and a great user experience that is much harder to copy than its iconic mechanical design.

ABB RobotWare

ABB's modular range of robot controllers, OmniCore™, provides a software platform (RobotWare) that can run both on the embedded robot controller and on a standard PC, a concept ABB calls the Virtual Controller (VC). The benefit of this is that advanced and exact robot programming can be done even without the robot hardware, for instance, while it is still in transit from the production facility. When the robot finally arrives on site, you can quickly synchronize the program and configurations with the robot and start producing. The software is also modular, making it possible to improve the user interface or connectivity without affecting the real-time motion execution or the safety system. ABB has invested heavily in making the robots secure so that they can be connected to a cloud infrastructure to enable additional software services such as predictive maintenance, automated software backups, and fleet management.

Mechanical robot arms are a commodity, but ABB's advanced simulation capabilities and modular software architecture are a clear differentiator, one that is very hard for competitors to match due to the vast feature richness and strong software ecosystem.

Tips and Reminders Before you Embark on the Software Modularization Journey

There are so many aspects to think of when improving a software architecture that it may be hard to know where to start. Often this leads to developers digging into the code first and asking questions later. Here are some tips on what to think about before you come to that.

Tip 1: Software architecture should not remain an R&D-only concern

It is easy to think that software modularization is only an R&D concern. If you only get access to the most brilliant software engineers and architects in the company, you should be able to figure out how to make it happen. While this might be technically true, it is important to remember that the development budget is set by people outside of R&D. If they can't see or understand the value that this effort will bring to the company, the chances are that they will not grant this work the budget and commitment it needs.

Furthermore, if you can involve Sales, Supply Chain, Sourcing, and Service in the requirements discussion to understand their needs and pain points, the chances are that the modularization effort will be more critical for them too. Here are some examples: How can you create alignment between how you want to sell your product and how you realize it with architecture? If it is a company strategy to sell the product as-a-service or to sell aftermarket upgrades, the software architecture team needs to understand this! If there is a need from Supply Chain to change the supplier of an electronics chip quickly, the software architecture team needs to be on the ball!

Tip 2: Try to quantify the value the improved software architecture will generate

This tip is connected to the first tip because it is much easier to get everyone on board and aligned with the modularization work if you can point at the value that it will generate for the company. This effort requires some understanding of the cost drivers for software.

Figure 6. The Main Cost Drivers for Hardware and Software

For software, Direct Material costs are usually not a large part of the total system cost. There might certainly be some software licensing involved, but for medium-to-high-volume products, software licensing is typically insignificant compared to direct material costs for hardware. Production flow is also not a major concern for software since software installation typically only involves a few steps in production. Supply chain efficiencies are usually not affected, since software can be sourced over the Internet almost instantly. Instead, it can be the other way around, where software can be a significant bottleneck for hardware supply chain efficiency. This issue is exemplified by the post-pandemic chip shortage that has hit many hardware-producing companies when attempting to switch to new chip suppliers.

For software, the Development costs, the Test & Verification effort, and the Maintenance & Support Costs are the most significant cost drivers of producing software products.

Therefore, it is important to focus on improvements in costing for these last 3 areas to gain a good understanding of the value that the module system could generate. What those gains are can be very different depending on the status of the current software architecture.

Examples:

- Are you spending development effort on overlapping work in multiple software platforms?

- Do you lack reuse, and are you implementing the same thing in several places in the code?

- Are you doing extensive manual tests & verification for every release? Could that be improved with smaller incremental releases instead?

- How much of your cost is related to finding and fixing bugs and deploying corrections?

Tip 3: Define KPIs early

There is an old saying in project management: "what isn't measured isn't followed." This means that it is crucial to follow the progress in the modularization work.

Make sure to develop some Key Performance Indicators (KPIs) early in the modularization effort. Of course, what those are depends on the goals for the project.

Here are some examples to get you going:

- Total build time for the software platform. (Can it be decreased through parallel build pipelines?)

- Number of public API functions that are automatically tested

- Number of internal API functions that are automatically tested

- Total installation time for a software release

- Total installation time for a software fix/patch

- Total configurability of the system (number of software products that can be covered by the module system and the module variants)

- How fast can you correct a critical software defect in deployed systems?

- Amount of Engineering to Order development work
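Several of these KPIs can be computed automatically in the build pipeline. As a minimal sketch, the function below derives the "public API functions that are automatically tested" KPI from two lists of function names. How those lists are obtained (for example from header files and test reports) is left open, and `api_test_coverage` and the function names are hypothetical.

```python
def api_test_coverage(api_functions, tested_functions):
    """Return (number of tested API functions, share of the API that is tested).

    Names in tested_functions that are not part of the API are ignored.
    """
    api = set(api_functions)
    tested = api & set(tested_functions)
    ratio = len(tested) / len(api) if api else 0.0
    return len(tested), ratio


# Hypothetical inputs: the public API and the functions exercised by tests.
public_api = ["start", "stop", "status", "reset"]
covered_by_tests = ["start", "status", "internal_helper"]

tested_count, tested_share = api_test_coverage(public_api, covered_by_tests)
```

Tracking both the count and the share over time shows whether test automation keeps pace as the API grows.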

Summary

This blog has looked into the challenges and opportunities that arise when hardware companies realize they should improve their software architecture, and what we see as best practices to succeed. Being good at developing software is quickly moving from nice-to-have to business-critical for many previously hardware-intensive companies. Creating a software architecture based on strategic modular principles is not just an R&D concern; it requires the involvement of the entire company to be successful. When done right, you can create a business where better software is released faster and with higher quality, and where products stay relevant on the market longer, benefiting both the company and its customers.

Let's Connect!

Strategic Product Architectures is a passion of mine and I'm happy to continue the conversation with you. Contact me directly via email or on my LinkedIn if you'd like to discuss the topic covered or be a sounding board in general around Modularity and Software Architectures.

Roger Kulläng

Senior Specialist Software Architectures

roger.kullang@modularmanagement.com

+46 70 279 85 92

LinkedIn